Ionic Tutorial#

This tutorial is a simple video-call application built with Ionic, using Angular and Capacitor, that allows:

- Joining a video call room by requesting a token from any application server.

- Publishing your camera and microphone.

- Subscribing to all other participants' video and audio tracks automatically.

- Leaving the video call room at any time.

It uses the LiveKit JS SDK to connect to the LiveKit server and interact with the video call room.

Running this tutorial#

1. Run LiveKit Server#

You can run LiveKit locally or use the free tier of LiveKit Cloud.

Alternatively, you can use OpenVidu, which is a fully compatible LiveKit distribution designed specifically for on-premises environments. It brings notable improvements in terms of performance, observability and development experience. For more information, visit What is OpenVidu?.

- Download OpenVidu

- Configure the local deployment

- Run OpenVidu

To use a production-ready OpenVidu deployment, visit the official OpenVidu deployment guide.

Configure Webhooks

All application servers have an endpoint to receive webhooks from LiveKit. For this reason, when using a production deployment you need to configure webhooks to point to your local application server in order to make it work. Check the Send Webhooks to a Local Application Server section for more information.

Follow the official instructions to run LiveKit locally.

Configure Webhooks

All application servers have an endpoint to receive webhooks from LiveKit. For this reason, when using LiveKit locally you need to configure webhooks to point to your application server in order to make it work. Check the Webhooks section from the official documentation and follow the instructions to configure webhooks.

Use your account in LiveKit Cloud.

Configure Webhooks

All application servers have an endpoint to receive webhooks from LiveKit. For this reason, when using LiveKit Cloud you need to configure webhooks to point to your local application server in order to make it work. Check the Webhooks section from the official documentation and follow the instructions to configure webhooks.

Expose your local application server

In order to receive webhooks from LiveKit Cloud on your local machine, you need to expose your local application server to the internet. Tools like Ngrok, LocalTunnel, LocalXpose and Zrok can help you achieve this.

These tools provide you with a public URL that forwards requests to your local application server. You can use this URL to receive webhooks from LiveKit Cloud, configuring it as indicated above.

2. Download the tutorial code#

3. Run a server application#

To run this server application, you need Node.js installed on your device.

- Navigate into the server directory

- Install dependencies

- Run the application

For more information, check the Node.js tutorial.

To run this server application, you need Go installed on your device.

- Navigate into the server directory

- Run the application

For more information, check the Go tutorial.

To run this server application, you need Ruby installed on your device.

- Navigate into the server directory

- Install dependencies

- Run the application

For more information, check the Ruby tutorial.

To run this server application, you need Java and Maven installed on your device.

- Navigate into the server directory

- Run the application

For more information, check the Java tutorial.

To run this server application, you need Python 3 installed on your device.

- Navigate into the server directory

- Create a python virtual environment

- Activate the virtual environment

- Install dependencies

- Run the application

For more information, check the Python tutorial.

To run this server application, you need Rust installed on your device.

- Navigate into the server directory

- Run the application

For more information, check the Rust tutorial.

To run this server application, you need PHP and Composer installed on your device.

- Navigate into the server directory

- Install dependencies

- Run the application

Warning

LiveKit PHP SDK requires the BCMath library. It is available out of the box in PHP for Windows, but a manual installation might be necessary on other operating systems. Run sudo apt install php-bcmath or sudo yum install php-bcmath.

For more information, check the PHP tutorial.

To run this server application, you need .NET installed on your device.

- Navigate into the server directory

- Run the application

Warning

This .NET server application needs the LIVEKIT_API_SECRET env variable to be at least 32 characters long. Make sure to update it here and in your LiveKit Server.

For more information, check the .NET tutorial.

4. Run the client application#

To run the client application tutorial, you need Node.js installed on your development computer.

- Navigate into the application client directory:

- Install the required dependencies:

- Serve the application:

You have two options for running the client application: in a browser or on a mobile device.

To run the application in a browser, you will need to start the Ionic server. To do so, run the following command:

Once the server is up and running, you can test the application by visiting

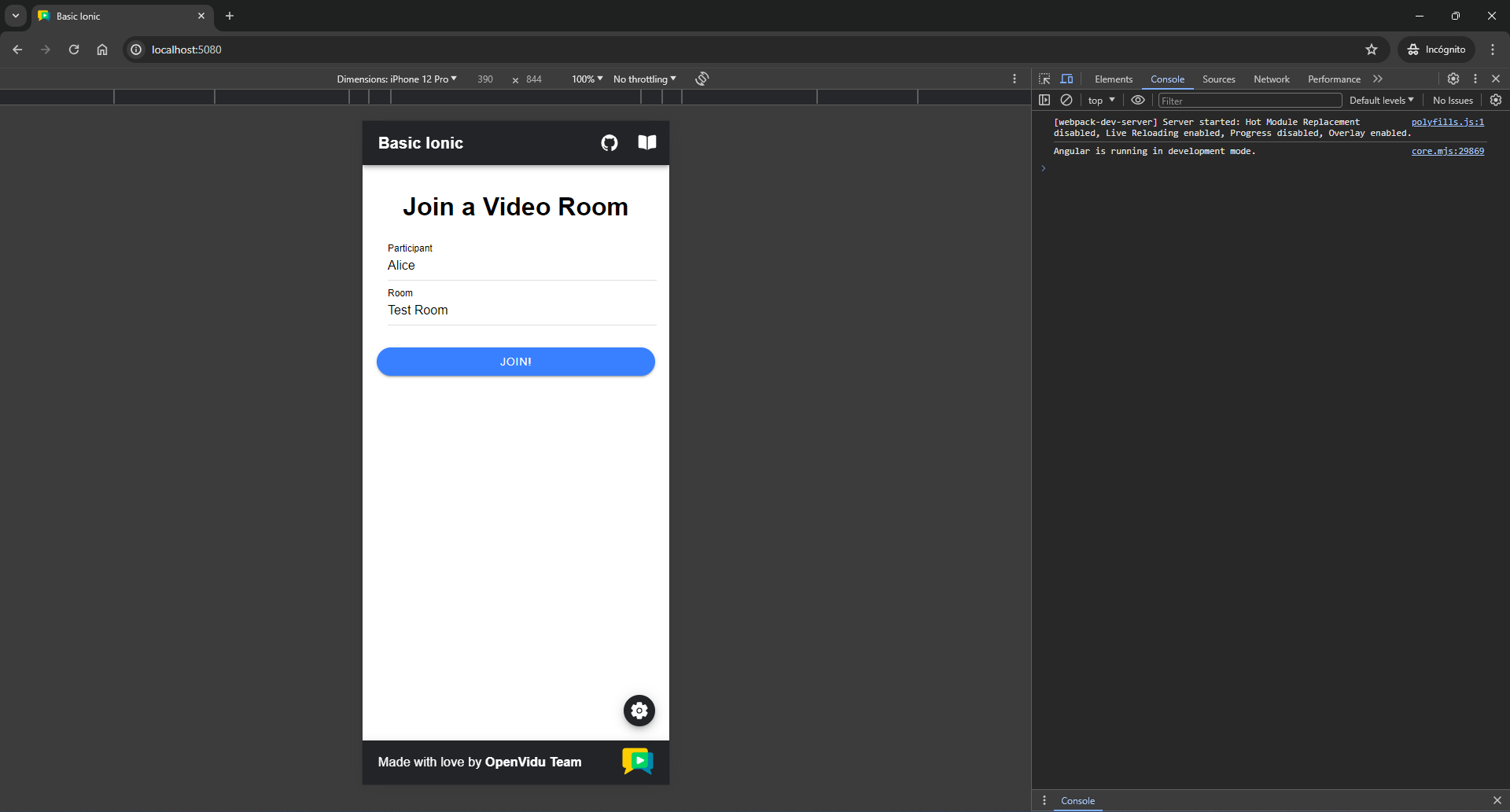

http://localhost:5080. You should see a screen like this:Mobile appearance

To show the app with a mobile device appearance, open the dev tools in your browser and find the button to adapt the viewport to a mobile device aspect ratio. You may also choose predefined types of devices to see the behavior of your app in different resolutions.

Accessing your application client from other devices in your local network

One advantage of running OpenVidu locally is that you can test your application client with other devices in your local network very easily without worrying about SSL certificates.

Access your application client through https://xxx-yyy-zzz-www.openvidu-local.dev:5443, where the xxx-yyy-zzz-www part of the domain is your LAN private IP address with dashes (-) instead of dots (.). For more information, see section Accessing your app from other devices in your network.

Running the tutorial on a mobile device presents additional challenges compared to running it in a browser, mainly due to the application being launched on a different device, such as an Android smartphone or iPhone, rather than our computer. To overcome these challenges, the following steps need to be taken:

- Localhost limitations:

  The usage of localhost in our Ionic app is restricted, preventing seamless communication between the application client and the server.

- Serve over local network:

  The application must be served over our local network to enable communication between the device and the server.

- Secure connection requirement for WebRTC API:

  The WebRTC API demands a secure connection for functionality outside of localhost, necessitating the serving of the application over HTTPS.

If you run OpenVidu locally you don't need to worry about this. OpenVidu will handle all of the above requirements for you. For more information, see section Accessing your app from other devices in your network.

Now, let's explore how to run the application on a mobile device:

Requirements

Before running the application on a mobile device, make sure that the device is on the same network as your PC and is connected to the PC via USB or Wi-Fi.

The script will ask you for the device you want to run the application on. You should select the real device you have connected to your computer.

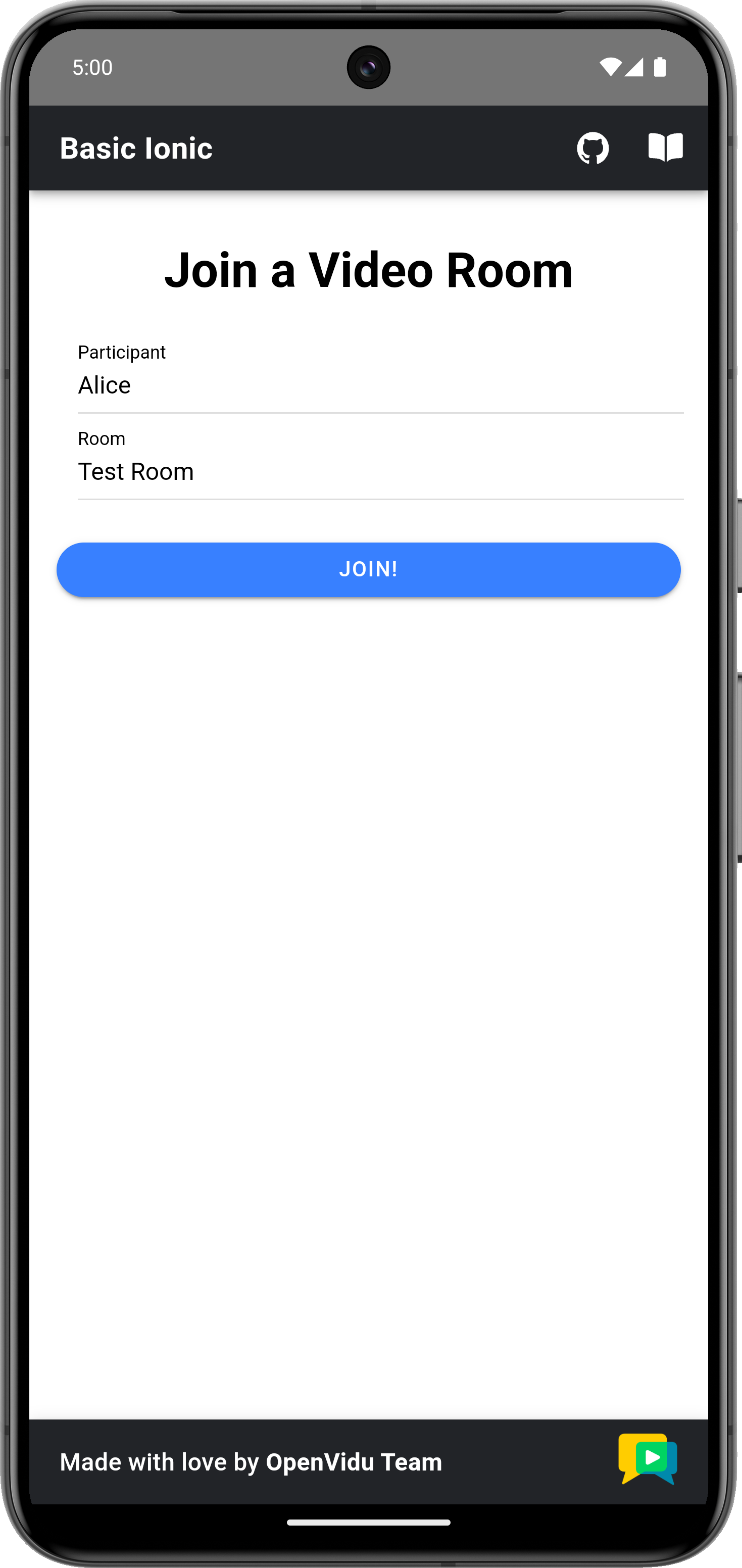

Once the mobile device has been selected, the script will launch the application on the device and you will see a screen like this:

This screen allows you to configure the URLs of the application server and the LiveKit server. You need to set them in order to request tokens from your application server and connect to the LiveKit server.

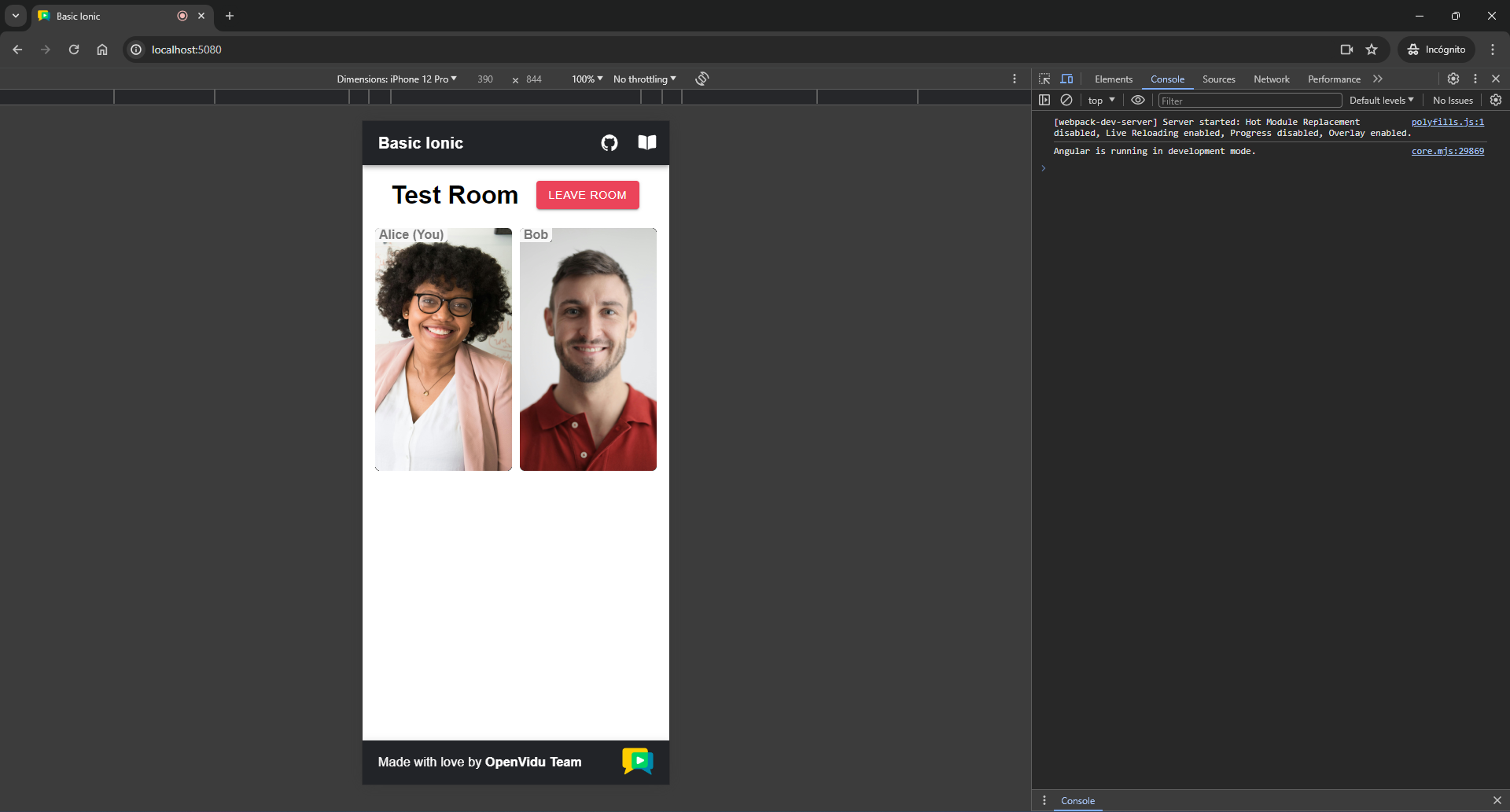

Once you have configured the URLs, you can join a video call room by providing a room name and a user name. After joining the room, you will be able to see your own video and audio tracks, as well as the video and audio tracks of the other participants in the room.


Understanding the code#

This Ionic project has been created using the Ionic CLI tool. You may come across various configuration files and other items that are not essential for this tutorial. Our focus will be on the key files located within the src/app/ directory:

- app.component.ts: This file defines the AppComponent, which serves as the main component of the application. It is responsible for handling tasks such as joining a video call and managing the video calls themselves.

- app.component.html: This HTML file is associated with the AppComponent, and it dictates the structure and layout of the main application component.

- app.component.scss: The CSS file linked to AppComponent, which controls the styling and appearance of the application's main component.

- VideoComponent: Component responsible for displaying video tracks along with participant's data. It is defined in the video.component.ts file within the video directory, along with its associated HTML and CSS files.

- AudioComponent: Component responsible for displaying audio tracks. It is defined in the audio.component.ts file within the audio directory, along with its associated HTML and CSS files.

To use the LiveKit JS SDK in an Ionic application, you need to install the livekit-client package. This package provides the necessary classes and methods to interact with the LiveKit server. You can install it using the following command:

Now let's see the code of the app.component.ts file:


- TrackInfo type, which groups a track publication with the participant's identity.

- The URL of the application server.

- The URL of the LiveKit server.

- Angular component decorator that defines the AppComponent class and associates the HTML and CSS files with it.

- The roomForm object, which is a form group that contains the roomName and participantName fields. These fields are used to join a video call room.

- The urlsForm object, which is a form group that contains the serverUrl and livekitUrl fields. These fields are used to configure the application server and LiveKit URLs.

- The room object, which represents the video call room.

- The local video track, which represents the user's camera.

- Map that links track SIDs with TrackInfo objects. This map is used to store remote tracks and their associated participant identities.

- A boolean flag that indicates whether the user is configuring the application server and LiveKit URLs.

The app.component.ts file defines the following variables:

- APPLICATION_SERVER_URL: The URL of the application server. This variable is used to make requests to the server to obtain a token for joining the video call room.

- LIVEKIT_URL: The URL of the LiveKit server. This variable is used to connect to the LiveKit server and interact with the video call room.

- roomForm: A form group that contains the roomName and participantName fields. These fields are used to join a video call room.

- urlsForm: A form group that contains the serverUrl and livekitUrl fields. These fields are used to configure the application server and LiveKit URLs.

- room: The room object, which represents the video call room.

- localTrack: The local video track, which represents the user's camera.

- remoteTracksMap: A map that links track SIDs with TrackInfo objects. This map is used to store remote tracks and their associated participant identities.

- settingUrls: A boolean flag that indicates whether the user is configuring the application server and LiveKit URLs.
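As a rough illustration of how remoteTracksMap might be shaped, the sketch below keys TrackInfo entries by track SID. It does not import livekit-client so that it can run on its own: TrackPublicationLike is a hypothetical stand-in for the SDK's remote track publication type, and addRemoteTrack and removeRemoteTrack are illustrative helper names that do not appear in the tutorial code.

```typescript
// Hypothetical stand-in for livekit-client's remote track publication,
// reduced to the two fields this sketch reads.
interface TrackPublicationLike {
  trackSid: string;
  kind: "video" | "audio";
}

// Groups a track publication with the identity of the participant who owns it.
type TrackInfo = {
  trackPublication: TrackPublicationLike;
  participantIdentity: string;
};

// Remote tracks keyed by track SID.
const remoteTracksMap = new Map<string, TrackInfo>();

// Illustrative helpers (names are assumptions, not tutorial code).
function addRemoteTrack(publication: TrackPublicationLike, participantIdentity: string): void {
  remoteTracksMap.set(publication.trackSid, {
    trackPublication: publication,
    participantIdentity,
  });
}

function removeRemoteTrack(trackSid: string): boolean {
  return remoteTracksMap.delete(trackSid);
}

addRemoteTrack({ trackSid: "TR_cam1", kind: "video" }, "alice");
addRemoteTrack({ trackSid: "TR_mic1", kind: "audio" }, "alice");
removeRemoteTrack("TR_mic1");
console.log(remoteTracksMap.size); // 1
```

Keying by track SID rather than by participant lets a single participant contribute both a video and an audio entry without one overwriting the other.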

Configuring URLs#

When running OpenVidu locally and launching the app in a web browser, leave the APPLICATION_SERVER_URL and LIVEKIT_URL variables empty. The function configureUrls() will automatically configure them with default values. However, for other deployment types or when launching the app on a mobile device, you should configure these variables with the correct URLs depending on your deployment.
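One way such defaults could be chosen is sketched below. This is not the tutorial's configureUrls() implementation: the helper name resolveDefaultUrls is hypothetical, and the localhost ports (6080 for the application server, 7880 for LiveKit) are assumptions about a typical local setup that this tutorial does not state. Ports 6443 and 7443 are the ones this tutorial uses for the openvidu-local.dev domain. The hostname is passed as an argument, rather than read from window.location.hostname, so the sketch can run outside a browser.

```typescript
interface ServerUrls {
  applicationServerUrl: string;
  livekitUrl: string;
}

// Hypothetical helper: fills in any empty URL based on the hostname serving the app.
// Trailing slashes are kept because the token request appends 'token' to the server URL.
function resolveDefaultUrls(hostname: string, configured: Partial<ServerUrls> = {}): ServerUrls {
  const isLocalhost = hostname === "localhost";
  return {
    applicationServerUrl:
      configured.applicationServerUrl ||
      // Port 6080 is an assumed local default.
      (isLocalhost ? "http://localhost:6080/" : `https://${hostname}:6443/`),
    livekitUrl:
      configured.livekitUrl ||
      // Port 7880 is an assumed local default.
      (isLocalhost ? "ws://localhost:7880/" : `wss://${hostname}:7443/`),
  };
}

console.log(resolveDefaultUrls("localhost"));
console.log(resolveDefaultUrls("192-168-1-10.openvidu-local.dev"));
```

An empty string counts as "not configured" here, which matches the idea of leaving the variables empty to get the defaults.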

If you are running OpenVidu locally and launching the app on a mobile device, you can set the applicationServerUrl to https://xxx-yyy-zzz-www.openvidu-local.dev:6443 and the livekitUrl to wss://xxx-yyy-zzz-www.openvidu-local.dev:7443, where the xxx-yyy-zzz-www part of the domain is the LAN private IP address of the machine running OpenVidu, with dashes (-) instead of dots (.).
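The dash-separated domain can be derived mechanically from the LAN address. The following sketch shows one way to do it; the function name toOpenViduLocalHost and the sample address 192.168.1.10 are illustrative assumptions, while the ports are the ones given above.

```typescript
// Converts a LAN IPv4 address such as "192.168.1.10" into the
// openvidu-local.dev host name ("192-168-1-10.openvidu-local.dev").
function toOpenViduLocalHost(lanIp: string): string {
  const octets = lanIp.split(".");
  const valid =
    octets.length === 4 &&
    octets.every((octet) => /^\d{1,3}$/.test(octet) && Number(octet) <= 255);
  if (!valid) {
    throw new Error(`Not an IPv4 address: ${lanIp}`);
  }
  return `${octets.join("-")}.openvidu-local.dev`;
}

// Sample address; replace it with the LAN IP of the machine running OpenVidu.
const host = toOpenViduLocalHost("192.168.1.10");
const applicationServerUrl = `https://${host}:6443`;
const livekitUrl = `wss://${host}:7443`;

console.log(applicationServerUrl); // https://192-168-1-10.openvidu-local.dev:6443
console.log(livekitUrl); // wss://192-168-1-10.openvidu-local.dev:7443
```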

If you leave them empty and the app is launched on a mobile device, the user will be prompted to enter the URLs when the application starts:

Joining a Room#

Once the user has entered their participant name and the name of the room they want to join, clicking the Join button calls the joinRoom() method in app.component.ts:


- Initialize a new Room object.

- Event handling for when a new track is received in the room.

- Event handling for when a track is destroyed.

- Get the room name and participant name from the form.

- Get a token from the application server with the room name and participant name.

- Connect to the room with the LiveKit URL and the token.

- Publish your camera and microphone.

The joinRoom() method performs the following actions:

- It creates a new Room object. This object represents the video call room.

  Info

  When the room object is defined, the HTML template is automatically updated hiding the "Join room" page and showing the "Room" layout.

- Event handling is configured for different scenarios within the room. These events are fired when new tracks are subscribed to and when existing tracks are unsubscribed.

  - RoomEvent.TrackSubscribed: This event is triggered when a new track is received in the room. It manages the storage of the new track in the remoteTracksMap, which links track SIDs with TrackInfo objects containing the track publication and the participant's identity.

  - RoomEvent.TrackUnsubscribed: This event occurs when a track is destroyed, and it takes care of removing the track from the remoteTracksMap.

  These event handlers are essential for managing the behavior of tracks within the video call. You can further extend the event handling as needed for your application.

  Take a look at all events

  You can take a look at all the events in the LiveKit documentation.

- It retrieves the room name and participant name from the form.

- It requests a token from the application server using the room name and participant name. This is done by calling the getToken() method:

  app.component.ts

  ```typescript
  /**
   * --------------------------------------------
   * GETTING A TOKEN FROM YOUR APPLICATION SERVER
   * --------------------------------------------
   * The method below requests the creation of a token to
   * your application server. This prevents the need to expose
   * your LiveKit API key and secret to the client side.
   *
   * In this sample code, there is no user control at all. Anybody could
   * access your application server endpoints. In a real production
   * environment, your application server must identify the user to allow
   * access to the endpoints.
   */
  async getToken(roomName: string, participantName: string): Promise<string> {
      const response = await lastValueFrom(
          this.httpClient.post<{ token: string }>(APPLICATION_SERVER_URL + 'token', { roomName, participantName })
      );
      return response.token;
  }
  ```

  This function sends a POST request using HttpClient to the application server's /token endpoint. The request body contains the room name and participant name. The server responds with a token that is used to connect to the room.

- It connects to the room using the LiveKit URL and the token.

- It publishes the camera and microphone tracks to the room using room.localParticipant.enableCameraAndMicrophone(), which asks the user for permission to access their camera and microphone at the same time. The local video track is then stored in the localTrack variable.
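The order of these steps can be sketched independently of Angular. In the sketch below, RoomLike is a hypothetical stand-in that mirrors only the Room members named above (on, connect and localParticipant.enableCameraAndMicrophone), the event names are assumed to be the string values behind RoomEvent.TrackSubscribed and RoomEvent.TrackUnsubscribed, and joinRoomSketch and TokenProvider are illustrative names. The real method additionally reads the form values and stores the local video track.

```typescript
// Hypothetical stand-in for the parts of livekit-client's Room used here.
interface RoomLike {
  on(event: string, handler: (...args: any[]) => void): RoomLike;
  connect(url: string, token: string): Promise<void>;
  localParticipant: { enableCameraAndMicrophone(): Promise<void> };
}

// Anything that can turn a room name and participant name into a token.
type TokenProvider = (roomName: string, participantName: string) => Promise<string>;

async function joinRoomSketch(
  room: RoomLike,
  getToken: TokenProvider,
  livekitUrl: string,
  roomName: string,
  participantName: string,
  tracks: Map<string, string>, // track SID -> participant identity
): Promise<void> {
  // Register the handlers first, so they are in place before the connection starts.
  room
    .on("trackSubscribed", (_track, publication, participant) => {
      tracks.set(publication.trackSid, participant.identity);
    })
    .on("trackUnsubscribed", (_track, publication) => {
      tracks.delete(publication.trackSid);
    });

  // Ask the application server for a token, then connect and publish.
  const token = await getToken(roomName, participantName);
  await room.connect(livekitUrl, token);
  await room.localParticipant.enableCameraAndMicrophone();
}
```

Passing the room and the token provider as arguments keeps the sequence easy to exercise with fakes, without a LiveKit server or a camera.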

Displaying Video and Audio Tracks#

In order to display participants' video and audio tracks, the app.component.html file integrates the VideoComponent and AudioComponent.


This code snippet does the following:

- We use the Angular @if block to conditionally display the local video track using the VideoComponent. The local property is set to true to indicate that the video track belongs to the local participant.

  Info

  The audio track is not displayed for the local participant because there is no need to hear one's own audio.

- Then, we use the Angular @for block to iterate over the remoteTracksMap. For each remote track, we create a VideoComponent or an AudioComponent depending on the track's kind (video or audio). The participantIdentity property is set to the participant's identity, and the track property is set to the video or audio track. The hidden attribute is added to the AudioComponent to hide the audio tracks from the layout.
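The branching that the template performs for remote tracks can be modelled as a small function. This is only a TypeScript model of the @for and @if logic, not the template itself; TrackInfoLike and componentFor are hypothetical names, and the component names are returned as plain strings.

```typescript
type TrackKind = "video" | "audio";

// Hypothetical, reduced version of the TrackInfo entries stored in remoteTracksMap.
interface TrackInfoLike {
  trackPublication: { trackSid: string; kind: TrackKind };
  participantIdentity: string;
}

// Decides which component the template would render for one remote track.
function componentFor(info: TrackInfoLike): "VideoComponent" | "AudioComponent" {
  return info.trackPublication.kind === "video" ? "VideoComponent" : "AudioComponent";
}

const remoteTracks = new Map<string, TrackInfoLike>([
  ["TR_cam", { trackPublication: { trackSid: "TR_cam", kind: "video" }, participantIdentity: "alice" }],
  ["TR_mic", { trackPublication: { trackSid: "TR_mic", kind: "audio" }, participantIdentity: "alice" }],
]);

// Equivalent of iterating the map in the template: one component per remote track.
const rendered = Array.from(remoteTracks.values()).map(
  (info) => `${componentFor(info)}(${info.participantIdentity})`,
);
console.log(rendered);
```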

Let's see now the code of the video.component.ts file:


- Angular component decorator that defines the VideoComponent class and associates the HTML and CSS files with it.

- The reference to the video element in the HTML template.

- The video track object, which can be a LocalVideoTrack or a RemoteVideoTrack.

- The participant identity associated with the video track.

- A boolean flag that indicates whether the video track belongs to the local participant.

- Attach the video track to the video element when the track is set.

- Detach the video track when the component is destroyed.

The VideoComponent does the following:

- It defines the properties track, participantIdentity, and local as inputs of the component:

  - track: The video track object, which can be a LocalVideoTrack or a RemoteVideoTrack.

  - participantIdentity: The participant identity associated with the video track.

  - local: A boolean flag that indicates whether the video track belongs to the local participant. This flag is set to false by default.

- It creates a reference to the video element in the HTML template.

- It attaches the video track to the video element when the view is initialized.

- It detaches the video track when the component is destroyed.
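The attach and detach pairing can be sketched without Angular or a real media element. In the sketch below, AttachableTrack mirrors only the attach() and detach() methods of a LiveKit track, MediaElementLike replaces the video element, and VideoLifecycleSketch with its method names is a hypothetical, framework-free model of the component, not the component's actual code.

```typescript
// Hypothetical stand-ins: MediaElementLike replaces the <video> element and
// AttachableTrack mirrors the attach()/detach() methods of a track.
interface MediaElementLike {
  id: string;
}

interface AttachableTrack {
  attach(element: MediaElementLike): void;
  detach(): void;
}

// Framework-free model of the component lifecycle.
class VideoLifecycleSketch {
  private readonly track: AttachableTrack;
  private readonly element: MediaElementLike;

  constructor(track: AttachableTrack, element: MediaElementLike) {
    this.track = track;
    this.element = element;
  }

  // Counterpart of ngAfterViewInit: the element exists, so the track can be attached.
  afterViewInit(): void {
    this.track.attach(this.element);
  }

  // Counterpart of ngOnDestroy: release the element when the component goes away.
  onDestroy(): void {
    this.track.detach();
  }
}

// Minimal fake track that records which elements it is attached to.
const attached: string[] = [];
const fakeTrack: AttachableTrack = {
  attach: (element) => {
    attached.push(element.id);
  },
  detach: () => {
    attached.length = 0;
  },
};

const lifecycle = new VideoLifecycleSketch(fakeTrack, { id: "video-1" });
lifecycle.afterViewInit();
console.log(attached.length); // 1
lifecycle.onDestroy();
console.log(attached.length); // 0
```

Detaching on destroy matters because the track can outlive the component; without it, the track would keep a reference to an element that is no longer in the page.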

Finally, let's see the code of the audio.component.ts file:


- Angular component decorator that defines the AudioComponent class and associates the HTML and CSS files with it.

- The reference to the audio element in the HTML template.

- The audio track object, which can be a RemoteAudioTrack or a LocalAudioTrack, although in this case, it will always be a RemoteAudioTrack.

- Attach the audio track to the audio element when the view is initialized.

- Detach the audio track when the component is destroyed.

The AudioComponent class is similar to the VideoComponent class, but it is used to display audio tracks. It attaches the audio track to the audio element when the view is initialized and detaches it when the component is destroyed.

Leaving the room#

When the user wants to leave the room, they can click the Leave Room button. This action calls the leaveRoom() method in app.component.ts:


- Disconnect the user from the room.

- Reset all variables.

- Call the leaveRoom() method when the component is destroyed.

The leaveRoom() method performs the following actions:

- It disconnects the user from the room by calling the disconnect() method on the room object.

- It resets all variables.

The ngOnDestroy() lifecycle hook is used to ensure that the user leaves the room when the component is destroyed.
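A minimal sketch of this cleanup, under the assumption that the state consists of the room, localTrack and remoteTracksMap variables described earlier, could look like the following. DisconnectableRoom is a hypothetical stand-in exposing only disconnect(), and CallStateSketch is an illustrative class name rather than the tutorial's AppComponent.

```typescript
// Hypothetical stand-in for the one Room method this sketch needs.
interface DisconnectableRoom {
  disconnect(): Promise<void>;
}

class CallStateSketch {
  room: DisconnectableRoom | undefined;
  localTrack: object | undefined;
  remoteTracksMap = new Map<string, object>();

  async leaveRoom(): Promise<void> {
    // Optional chaining makes this a no-op if the user never joined a room.
    await this.room?.disconnect();

    // Reset all variables so no stale room or track references remain.
    this.room = undefined;
    this.localTrack = undefined;
    this.remoteTracksMap.clear();
  }

  // Counterpart of ngOnDestroy: leave the room when the component is torn down.
  async onDestroy(): Promise<void> {
    await this.leaveRoom();
  }
}
```

Because the room object is reset to undefined, the template condition that switches between the "Join room" page and the "Room" layout flips back to the join page.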

Specific mobile requirements#

In order to be able to test the application on an Android or iOS device, the application must ask for the necessary permissions to access the device's camera and microphone. These permissions are requested when the user joins the video call room.

The application must include the following permissions in the AndroidManifest.xml file located in the android/app/src/main directory:

The application must include the following permissions in the Info.plist file located in the ios/App/App directory: